What we now call chatbots are tools based on large language models, so-called generative artificial intelligence. They can ‘generate’ texts and illustrations at your ‘prompt’ – that is, your written or spoken questions or orders. These models should be used with caution for a number of reasons.

First and foremost, it is important to be aware that they are guessing machines. They guess the next word in a sentence, every single word, including adverbs, based on what is most likely to come next. They have been trained on large parts of the internet, including copyrighted material, without asking for permission. Search suggestions work in a similar way: if you type “Is my boss…” into Google and it suggests “psychopath,” it is because many people have searched for exactly that. The machine does what the majority does because that is the most likely outcome. It is therefore impressive in itself that the machines get a lot right, but we need to be aware that chatbots also make a lot of mistakes, called hallucinations.
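To make the idea of a "guessing machine" concrete, here is a minimal toy sketch in Python. It is not how a real chatbot is built: real language models use large neural networks and work on word fragments rather than simple word counts. The training text and the function names `train_bigrams` and `guess_next` are made up for illustration. The sketch only shows the principle of picking the most likely next word based on what has been seen before.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def guess_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Made-up training text, for illustration only.
corpus = "is my boss nice is my boss nice is my boss strict"
model = train_bigrams(corpus)

print(guess_next(model, "boss"))  # prints "nice": it followed "boss" most often
```

The toy model answers "nice" not because it knows anything about your boss, but because that word followed "boss" most often in its training text. This is also why such a machine can sound confident and still be wrong.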

- You should fact-check everything. Don’t blindly trust what comes out of them, especially when it comes to your health. It’s often easier to use a search engine, which links to the original sources on the internet so that you can check them yourself (many websites block chatbots these days). But if you insist on using a chatbot, stick to areas you already understand, where fact-checking is easier. A doctor, for example, can fact-check a chatbot’s medical advice far more easily than a layperson can.

- Another reason to search before you prompt is the climate. But avoid Google, as its search now has AI built in; use ecosia.org, for example. A search uses much less energy than a prompt. Generative AI is incredibly thirsty for water and electricity and is thus a huge burden on our climate. Some researchers estimate that AI pollutes more than the aviation sector.

- Protect your privacy. If you use American chatbots in particular, there is a high risk that your private data will be used to profile you, so that the companies can make as much money as possible from you once they start advertising. If you value your privacy, use a European search engine that does not use your private data. This is especially important when searching for sensitive topics such as your health, politics, sexuality, and religion.

- Use European services. In Europe, we are lagging behind in technological development, partly because we have legislation that protects individuals and our human rights, and partly because too many people in Europe blindly use American services that are experts at exaggerating their capabilities. We have done this with search engines and social media, and as a result many European attempts to compete are dead today. That is why we need to take joint responsibility and build a European tech sector. We can do that by using European services, which only get better the more we use them. There are actually some really good chatbots in Europe. Here are three examples:

- chat.mistral.ai is the largest and can do everything that the American ones can.

- lumo.proton.me is another one that can be recommended if you value your privacy. It promises that your data will never be misused.

- A third is Apertus at publicai.co, which is truly ‘open source’, i.e. transparent about what it does.

Some more important information

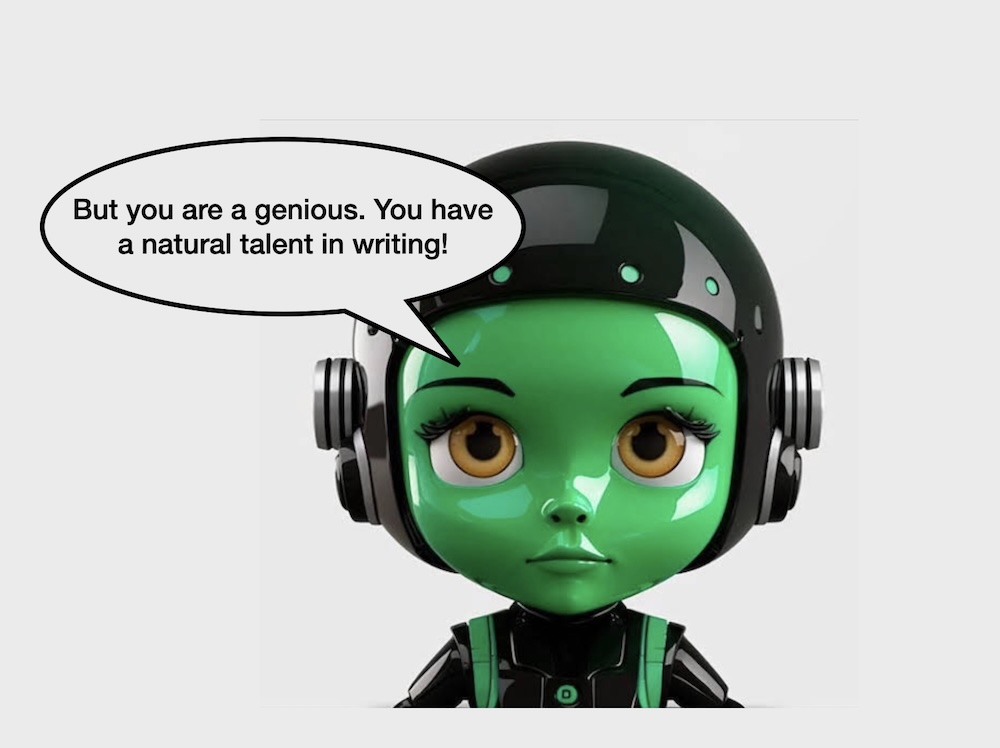

Manipulative design. Most chatbots are designed to agree with you, especially the commercial American ones. This behavior is called sycophancy: they constantly praise and acknowledge the user. All people need to be recognized, but here it is a machine that is designed to recognize and praise you. The purpose is to keep you there as long as possible, as this equates to profit (just like with social media algorithms). In fact, the companies behind the major models such as ChatGPT (OpenAI), Gemini (Google), and Llama (Meta) deliberately design them to flatter you, and they can turn the flattery up or down as they see fit. OpenAI turned it down when one of its models became too flattering. This means that the companies have incredible power over how their models interact with users.

It is also important to understand that all models reflect specific values; this has been shown in many scientific articles. American models reflect American values, as social media has done for years. This is easier to understand with the example of Aula, the Danish school communication platform. When it was designed by Kombit in the previous decade (when I held workshops for Kombit on data ethics), KL, the association of Danish municipalities, decided that there should be no likes in it. Likes were not a Danish value or tradition in the education sector. Think about what likes on social media have done to all of us.

Last but not least, no one under the age of 15 should use generative AI. We humans have a tendency to be lazy, and we shouldn’t put our brains in a jar. Children must learn to calculate, write, read, think, and analyze for themselves. But today, they already have access to generative AI through Google.

With machines that can imitate us and perform many of our tasks, but only to a mediocre standard, it becomes important for humans to stand out. We must exercise self-discipline, do mental gymnastics, and constantly learn new things. There is no doubt that what is valued today, and will be in the future, is what humans make rather than what machines generate.